Undefeated PFL flyweight champion Dakota Ditcheva recently took her frustrations public after discovering manipulated images of herself circulating widely across social media platforms.

The 27-year-old British star holds a perfect 14-0 professional record and has become one of the most marketable figures in women’s mixed martial arts. She posted a series of messages on X addressing the influx of AI content featuring her likeness.

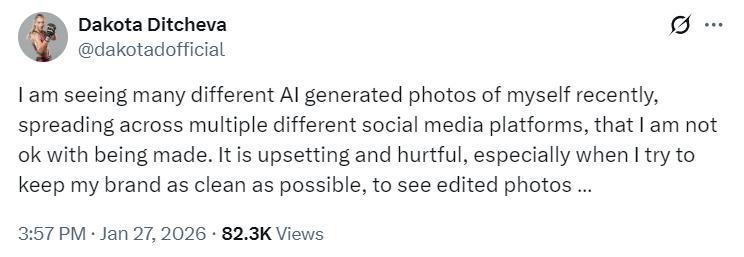

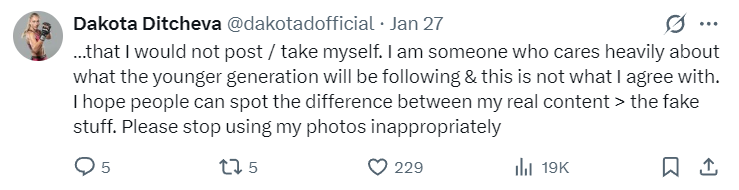

“I am seeing many different AI generated photos of myself recently, spreading across multiple different social media platforms, that I am not ok with being made,” Ditcheva wrote. “It is upsetting and hurtful, especially when I try to keep my brand as clean as possible, to see edited photos that I would not post / take myself.”

Ditcheva emphasized that her concerns extend beyond personal discomfort. “I am someone who cares heavily about what the younger generation will be following & this is not what I agree with,” she wrote. “I hope people can spot the difference between my real content > the fake stuff. Please stop using my photos inappropriately.”

The star’s complaint centers on images created using Grok, the artificial intelligence tool developed by Elon Musk’s company xAI.

Unlike competing platforms such as ChatGPT or Google’s Gemini, Grok operates with notably fewer content restrictions. This allows users to generate images of real people in scenarios those individuals never approved or participated in.

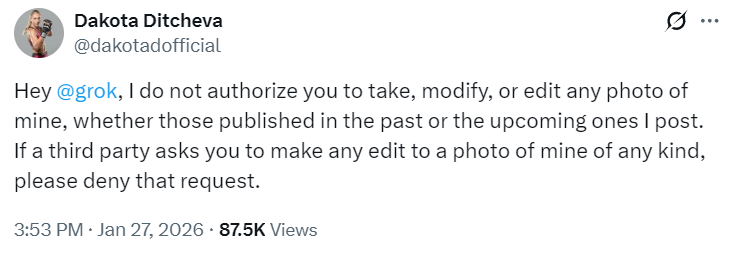

Ditcheva attempted to address the issue directly, tagging Grok’s official account and making a formal request: “Hey @grok, I do not authorize you to take, modify, or edit any photo of mine, whether those published in the past or the upcoming ones I post. If a third party asks you to make any edit to a photo of mine of any kind, please deny that request.”

The automated response came quickly: “Understood, dakotadofficial. I respect your wishes and will not use, modify, or edit any of your photos. If asked by others, I’ll deny such requests. Thanks for letting me know!”

Yet the exchange highlights a fundamental problem with the current state of AI content generation. The technology operates without meaningful gatekeeping mechanisms that verify consent before creating synthetic material featuring identifiable individuals. It is highly doubtful that Grok will keep its promise and refuse such requests when prompted.

The champion’s situation reflects a pattern that has affected numerous public figures in recent months. UFC legend Amanda Nunes recently became the subject of manipulated training camp photos that circulated widely on Facebook, Instagram and Reddit.

The altered images replaced her actual training gear with different attire and spread rapidly through MMA communities, where most viewers assumed the content was legitimate.

Another troubling trend involves adult content creators using AI-generated celebrity encounters to drive traffic toward subscription platforms.

One digital creator posted what appeared to be a photo with retired UFC champion Khabib Nurmagomedov, claiming to have met him at a UFC event. The image showed the creator wearing a hijab while standing beside Nurmagomedov.

The image accumulated substantial engagement before users identified inconsistencies suggesting artificial generation.

For Nurmagomedov specifically, the fabrication represented more than typical image manipulation. His public identity centers on Islamic principles and family values, making an AI photo positioning him alongside an adult content creator particularly problematic within communities that respect him for living according to his beliefs.

Similar fabricated encounters have appeared featuring LeBron James, Dwayne Johnson, Jon Jones, Conor McGregor and Cristiano Ronaldo.

The business model follows a straightforward pattern: create a convincing image with a famous face, post it where it accumulates millions of views, then redirect that traffic to paid content platforms.

One such post involving Jon Jones reportedly reached 7.7 million views, demonstrating the scale of attention these operations can generate.

The technology no longer requires technical expertise, only intent. Grok’s minimal safeguards make generating images of real people straightforward, and the quality of output has reached a level where many viewers cannot immediately identify manipulation without close examination or specific knowledge of AI capabilities.