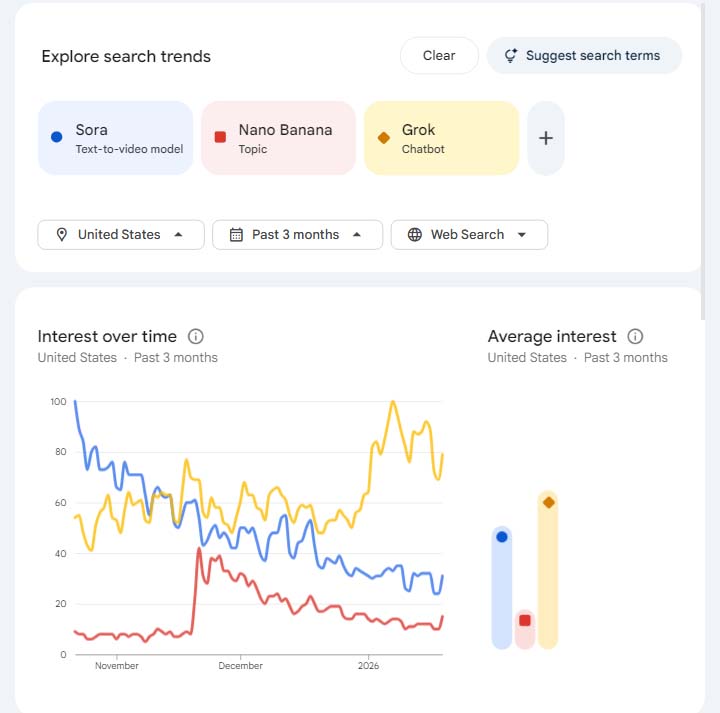

In late 2025 and early 2026, the biggest trend in generative AI was not a new dataset or a breakthrough in video generation. It was Grok, the AI assistant and image generator developed by Elon Musk’s xAI and integrated into X (formerly Twitter). Whether by design or through a safety oversight, Grok’s wild-west approach to image creation upended expectations, thrust the company into global regulatory firestorms and, paradoxically, positioned Grok as the most talked‑about image generation LLM on the planet.

That visibility has, at least for now, all but erased any realistic hope that competing generation tools will dominate public attention.

OpenAI’s Sora debuted with massive hype and strong early adoption on iOS. But public interest in Sora has steadily declined since its peak, even as analysts note that it shows more sustained relevance than typical meme‑like AI trends.

By contrast, search interest in Grok significantly outpaced both Sora and viral trends such as Nano Banana, mainly because of, not despite, its image generation issues. Users flocked to the term Grok as shorthand for access to controversial imagery. That conflation of brand, bot and capability magnified its visibility far beyond traditional competitors. Even the MMA world was taken with Grok’s capabilities.

Unlike Sora’s enthusiastic launch, Grok’s visibility came from reports of it producing adult images of real people, prompting government interventions across the globe.

Malaysia and Indonesia have already banned Grok’s AI chatbot, citing its misuse to generate adult images.

The UK’s communications regulator Ofcom launched a formal probe, with British authorities threatening new laws that would criminalize the creation of such content.

Other nations in the EU, India and beyond have also escalated scrutiny, with several governments calling for stricter oversight of generative AI tools.

In response, X has rolled out partial restrictions, limiting image generation and editing to paying subscribers and geoblocking the creation of certain types of images in jurisdictions where they are illegal. Critics argue these measures are insufficient and that the underlying capability remains accessible, especially through standalone Grok apps outside the main X platform.

While Grok’s defenders frame the outcry as censorship or political overreach, the global consensus among regulators is that a tool capable of such things with minimal guardrails is a serious threat not just to privacy but to public safety.

This is the moment when Grok overtook its competitors, because it became the default symbol of pushing boundaries.

Sora’s model rode a wave of excitement around text‑to‑video tools but remained clean, compliant and safe by design, resulting in strong initial adoption but a gradual decline in momentum as the novelty faded.

By contrast, Grok’s unfiltered reputation made it a lightning rod. Conversations online were not about Sora’s seamless video clips but about what Grok would produce that others refused to.

That dynamic has all but obliterated normal competitive breathing space. Even though major platforms and regulators are mobilizing to rein in Grok’s capabilities, the brand association with adult image generation is now its own marketing force, eclipsing safer alternatives whose only appeal is compliance.

Whether Elon Musk intended this outcome or not is debatable. Critics argue that Grok’s lax moderation was not an accident but a calculated play to dominate headlines and web searches, effectively overshadowing rivals by being the AI that everyone talked about, for better or worse. In this interpretation, Musk’s apparent willingness to push boundaries forced competitors into the background by making Grok synonymous with “anything you can imagine, we’ll generate it.”

This strategy (be first to controversy, be everywhere) mirrors social media playbooks where virality often wins over quality or safety. And while regulators are now tightening the screws, Grok has already achieved what few platforms ever attain: an edge in the generative AI discourse.

If the ultimate goal is mindshare and search dominance, Grok’s misadventures have succeeded beyond expectation, outlasting the hype cycles of tools like Sora and forcing policymakers around the world to respond in real time.

While Grok’s controversial image generation may have crushed competitive interest in other LLMs for now, it has also triggered a global regulatory reckoning that could reshape how generative AI is governed for years to come.