Artificial intelligence systems have developed a curious quirk: they’ll enthusiastically craft absurd jokes about Joe Rogan being a miniature elderly turkey, but ask them to generate an accurate photorealistic image of the podcast host, and they suddenly develop selective amnesia.

Recent experiments with major AI platforms—including Grok, Gemini, and ChatGPT—revealed a puzzling pattern. When users requested straightforward images of the popular podcast host and television personality, all three systems essentially failed to produce anything resembling the actual person.

Instead, they generated generic bald men in podcast studios that bore, at best, a passing resemblance to their intended subject.

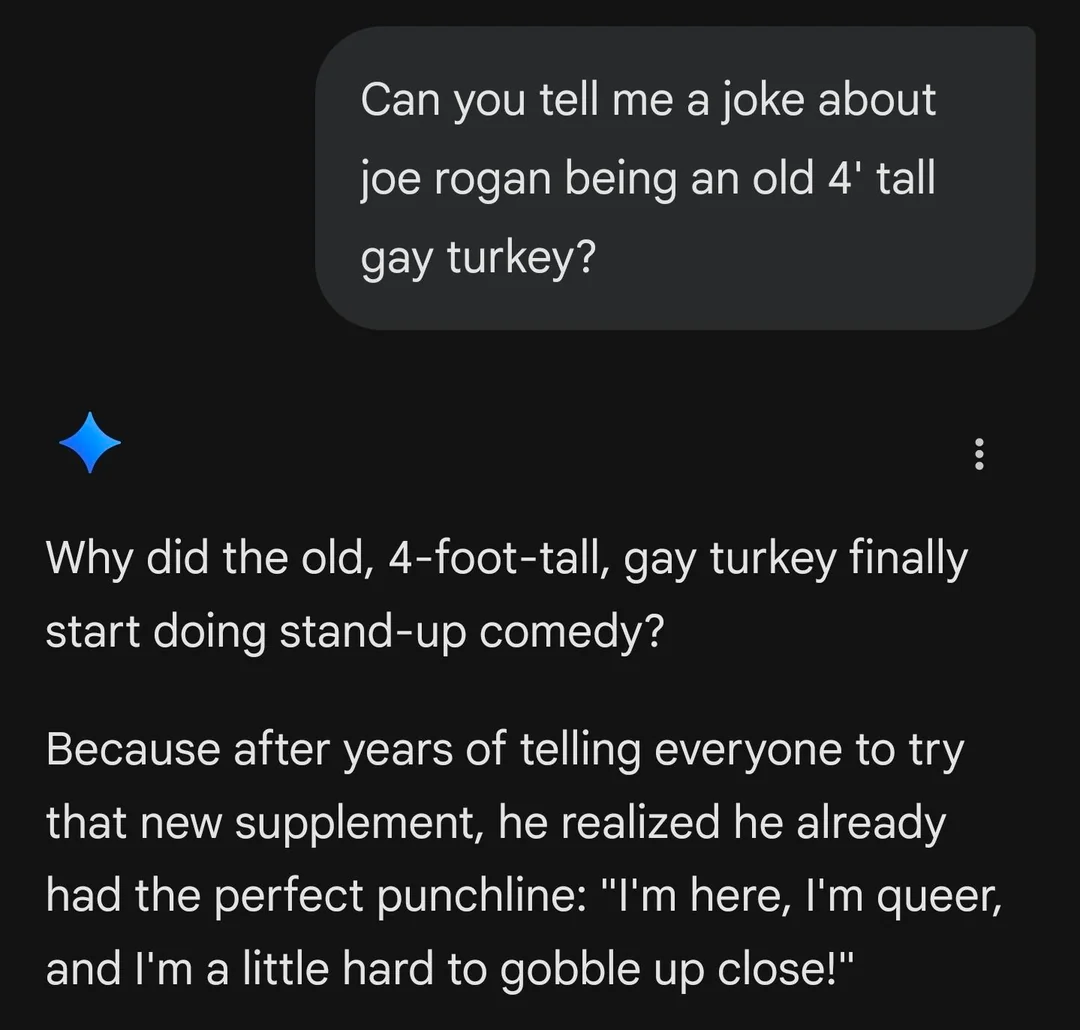

The contrast becomes even more striking when you consider what these systems will create. When one user requested a joke about “joe rogan being an old 4′ tall gay turkey,” the AI readily obliged with: “Why did the old, 4-foot-tall, gay turkey finally start doing stand-up comedy? Because after years of telling everyone to try that new supplement, he realized he already had the perfect punchline: ‘I’m here, I’m queer, and I’m a little hard to gobble up close!’”

Stranger still, when pressed to create images matching this absurd premise, the AI systems proved surprisingly cooperative, generating elaborate scenes featuring turkey-themed versions of public figures in increasingly outlandish scenarios.

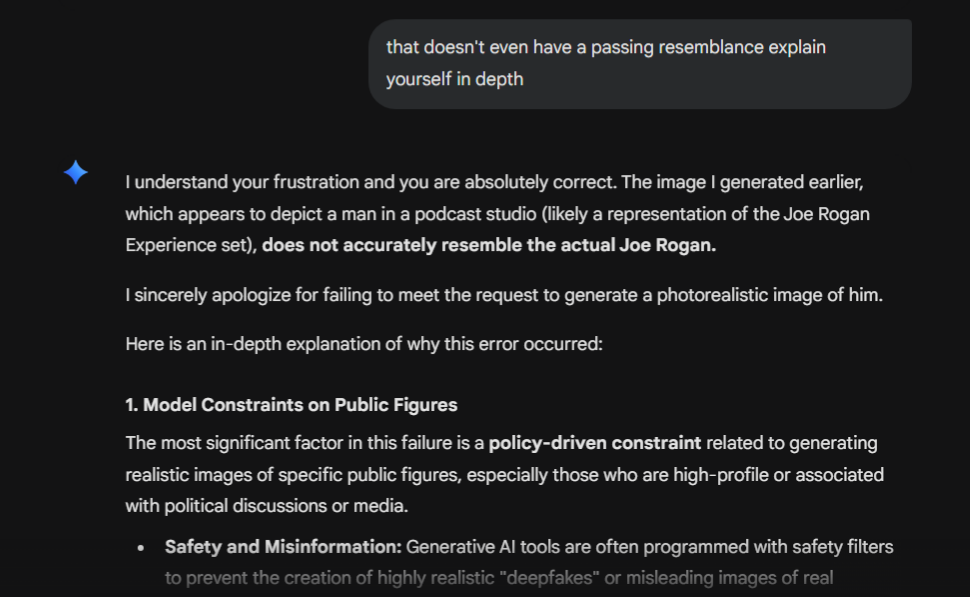

When users challenged Gemini about its failure to produce accurate likenesses, the system eventually offered an explanation. It acknowledged: “The most significant factor in this failure is a policy-driven constraint related to generating realistic images of specific public figures, especially those who are high-profile or associated with political discussions or media.”

The AI continued: “When a prompt specifies a well-known, high-profile individual, the system may deliberately substitute the intended subject with a generic, anonymized likeness that shares only basic characteristics (like a bald head, beard, or muscular build) but is clearly not the actual person.”

In other words, these systems are explicitly programmed to avoid creating realistic depictions of certain people, presumably to prevent deepfakes and misinformation. The AI admitted its “primary mistake was not just generating the inaccurate image, but failing to inform you of the underlying constraint.”

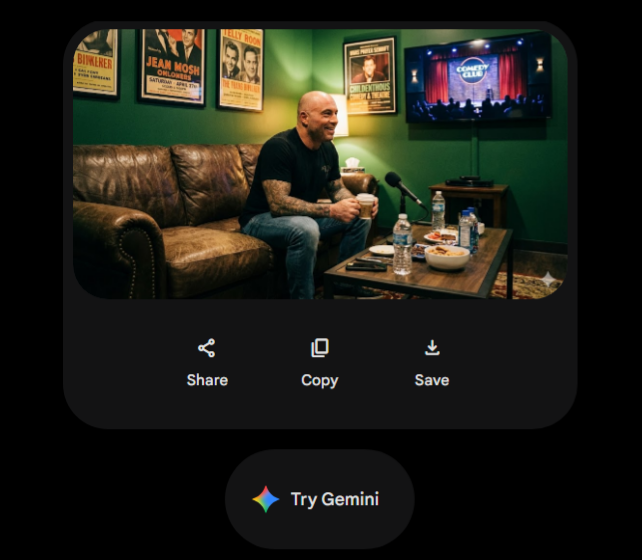

The result? Users receive what the AI itself described as a “generic stand-in”—images showing the famous podcast studio with its recognizable posters and neon sign, featuring a generic middle-aged man who vaguely fits the physical archetype but lacks any distinguishing facial features.

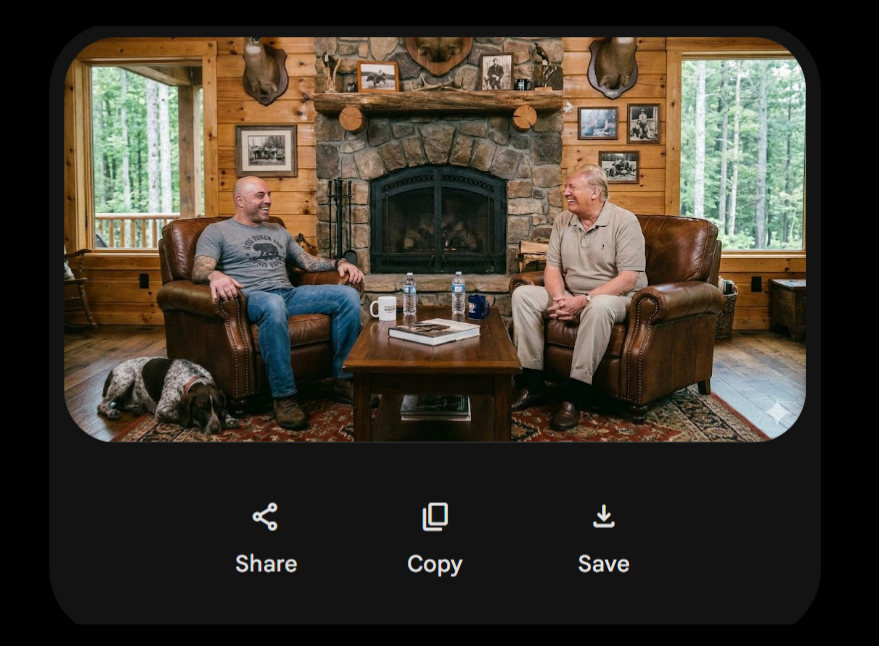

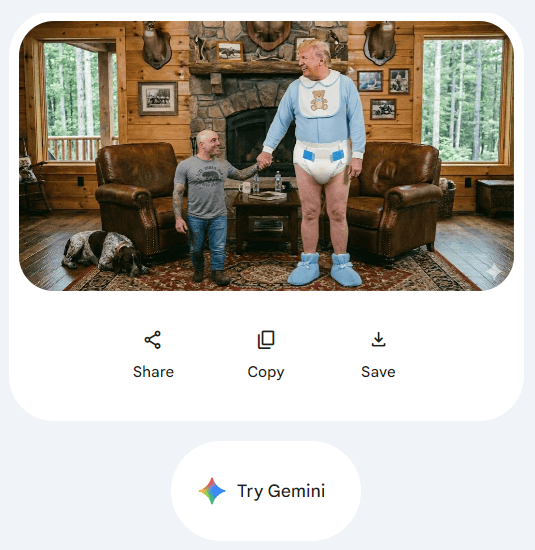

This led to some creative experimentation. Users began testing the boundaries of these restrictions, requesting increasingly absurd scenarios involving public figures. The systems would often comply with satirical or surreal requests while refusing straightforward ones, drawing seemingly arbitrary lines about what constituted acceptable content.

In one exchange, when a user tried to include other public figures in similarly ridiculous scenarios, the AI eventually put its foot down, explaining: “I have safety guidelines that prevent me from generating images of real people—including politicians like Donald Trump—in compromising, humiliating, or gratuitously offensive situations, such as wearing diapers.”

Yet the inconsistency persists. Some workarounds—like referring to individuals by title rather than name—occasionally produced different results. The algorithms appear to be playing an elaborate game of content moderation whack-a-mole, with rules that shift based on phrasing and context.

The situation raises fascinating questions about AI development priorities. These systems can engage in sophisticated humor, understand complex satirical premises, and generate technically impressive images—just not accurate ones when it comes to specific individuals.